Why most user research fails early-stage teams

User research has a reputation problem in startups. Founders think it means months of ethnographic studies, expensive usability labs, and academic rigor. So they skip it entirely and build on assumptions.

The truth is somewhere in the middle. You don't need a research department. You need five conversations and a structured way to capture what you learn.

The 5-interview framework

Choose the right people

Talk to people who have the problem you're solving, not people who might theoretically have it someday. If you're building a tool for sales teams, talk to salespeople who are actively struggling with the workflow you want to improve.

Ask about behavior, not opinions

"Would you use a product that does X?" is a useless question. People are terrible at predicting their own behavior. Instead, ask: "Tell me about the last time you dealt with [problem]. What did you do? What tools did you use? What was frustrating?"

Look for patterns, not outliers

After five interviews, you'll see patterns. If three out of five people describe the same workaround for the same problem, that's signal. If one person mentions something unique, park it — it might matter later, but it shouldn't drive your MVP.
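If you keep your interview notes in any structured form, the pattern check can be done mechanically. Here is a minimal sketch in Python, assuming you have reduced each interview to a set of workarounds the person described. The sample notes, the `find_patterns` name, and the cutoff of three mentions are illustrative assumptions, not part of any fixed method.

```python
from collections import Counter

# Hypothetical notes: one set of observed workarounds per interview.
# Using a set means each interview counts a given workaround only once.
interviews = [
    {"spreadsheet export", "manual follow-up emails"},
    {"spreadsheet export"},
    {"spreadsheet export", "sticky notes"},
    {"manual follow-up emails"},
    {"shared inbox"},
]

# Assumed cutoff, taken from the "three out of five" rule of thumb.
SIGNAL_THRESHOLD = 3


def find_patterns(interviews, threshold=SIGNAL_THRESHOLD):
    """Split workarounds into patterns (signal) and parked items."""
    counts = Counter(w for notes in interviews for w in notes)
    patterns = {w: n for w, n in counts.items() if n >= threshold}
    parked = {w: n for w, n in counts.items() if n < threshold}
    return patterns, parked


patterns, parked = find_patterns(interviews)
print("Signal:", patterns)
print("Parked for later:", parked)
```

Anything that lands in `parked` is not thrown away. It simply stays out of the MVP discussion until more interviews confirm or dismiss it.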

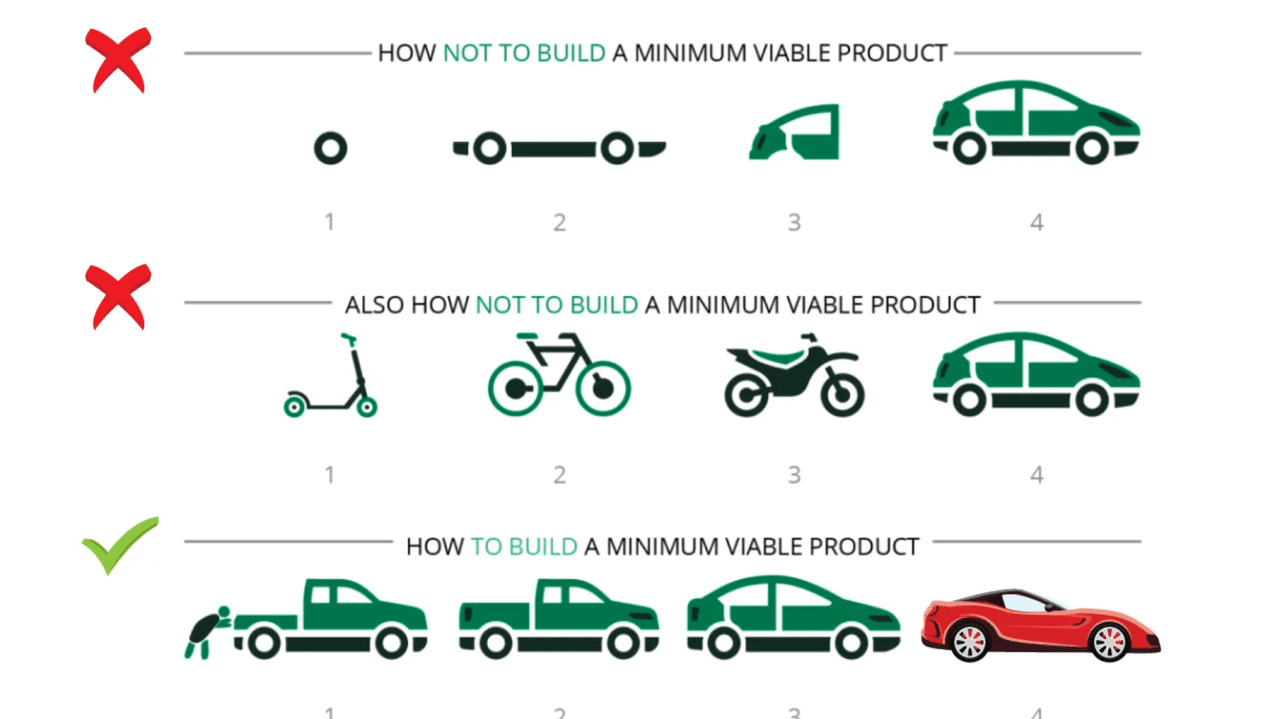

From insights to MVP scope

Map each pattern to a feature hypothesis: "Users currently do [workaround] because [reason]. If we build [feature], they'll [expected behavior]."

Rank the hypotheses by how many interviewees described the underlying pattern, and you have a prioritized list of features grounded in evidence, not intuition. Build the top three, ship them, and measure whether the expected behavior actually happens.
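One way to keep the hypotheses honest is to store each one with its evidence. The sketch below assumes a hypothetical `FeatureHypothesis` record whose fields mirror the template above, plus a `mentions` count (how many interviews described the workaround) used for ranking. Ranking by mention count is an assumption about what "prioritized" means here; the example entries are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class FeatureHypothesis:
    workaround: str         # what users do today
    reason: str             # why they do it
    feature: str            # what we would build
    expected_behavior: str  # what we expect users to do with it
    mentions: int           # interviews that described the workaround

    def statement(self) -> str:
        """Fill in the hypothesis template as one sentence pair."""
        return (
            f"Users currently {self.workaround} because {self.reason}. "
            f"If we build {self.feature}, they'll {self.expected_behavior}."
        )


def top_hypotheses(hypotheses, limit=3):
    """Return the hypotheses with the most interview evidence first."""
    return sorted(hypotheses, key=lambda h: h.mentions, reverse=True)[:limit]


# Illustrative data only.
backlog = [
    FeatureHypothesis("track deals on sticky notes", "the CRM is slow to open",
                      "a quick-capture widget", "log deals as they happen", 1),
    FeatureHypothesis("export leads to a spreadsheet", "the CRM can't filter by region",
                      "region filters", "stop exporting", 3),
    FeatureHypothesis("send follow-ups by hand", "reminders are unreliable",
                      "scheduled follow-ups", "schedule instead of remembering", 2),
    FeatureHypothesis("share one inbox", "handoffs get lost",
                      "assignment rules", "assign leads in the tool", 2),
]

for h in top_hypotheses(backlog):
    print(h.mentions, "|", h.statement())
```

Writing the expected behavior down before building makes it harder to rationalize a weak result after launch.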

When to research again

Research isn't a phase — it's a habit. After your MVP ships, watch how people actually use it. The gap between expected behavior and actual behavior is where your next insights live.
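The gap can be made concrete with very little instrumentation. As a rough sketch, assume your product logs events as `(user, action)` pairs and that each hypothesis names one action that represents the expected behavior. The event names, the `behavior_rate` helper, and the target figure are all hypothetical.

```python
def behavior_rate(events, expected_action):
    """Share of active users who performed expected_action at least once."""
    active = {user for user, _ in events}
    did_it = {user for user, action in events if action == expected_action}
    return len(did_it) / len(active) if active else 0.0


# Illustrative event log: (user, action) pairs.
events = [
    ("ana", "login"), ("ana", "auto_export"),
    ("ben", "login"),
    ("cy", "login"), ("cy", "auto_export"),
    ("dee", "manual_export"),
]

expected_rate = 0.8  # assumed target written down before launch
actual_rate = behavior_rate(events, "auto_export")
gap = expected_rate - actual_rate

print(f"expected {expected_rate:.0%}, actual {actual_rate:.0%}, gap {gap:.0%}")
```

A large gap is not a failure in itself. It tells you which users to interview next: the ones who did not do what you expected.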